Wikipedia at 24

“With more than 250 million views each day, Wikipedia is an invaluable educational resource”.[1]

With Wikipedia turning 24 this week (January 15th), and the Wikimedia residency at the University of Edinburgh turning 9 this week too, this post examines where we are with Wikipedia today in light of artificial intelligence and the ‘existential threat’ it poses to our knowledge ecosystem. Or not. We’ll see.

NB: This post is especially timely given also Keir Starmer’s focus on “unleashing Artificial Intelligence across the UK” on Monday[2][3] and our Principal’s championing of the University of Edinburgh as “a global centre for artificial intelligence excellence, with an emphasis on using AI for public good” this week.

Before we begin in earnest

Wikipedia has, for some time, been given preferential placement in the top search results of Google, the number one search engine. And “search is the way we live now” (Darnton in Hillis, Petit & Jarrett, 2013, p.5)... though whether that stays the same remains to be seen with the emergence of chatbots and ‘AI summary’ services. So it is incumbent on knowledge-generating academic institutions to support staff and students in developing robust information literacy: the 21st-century digital research skills necessary in the world today, and an understanding of how knowledge is created, curated and disseminated online.

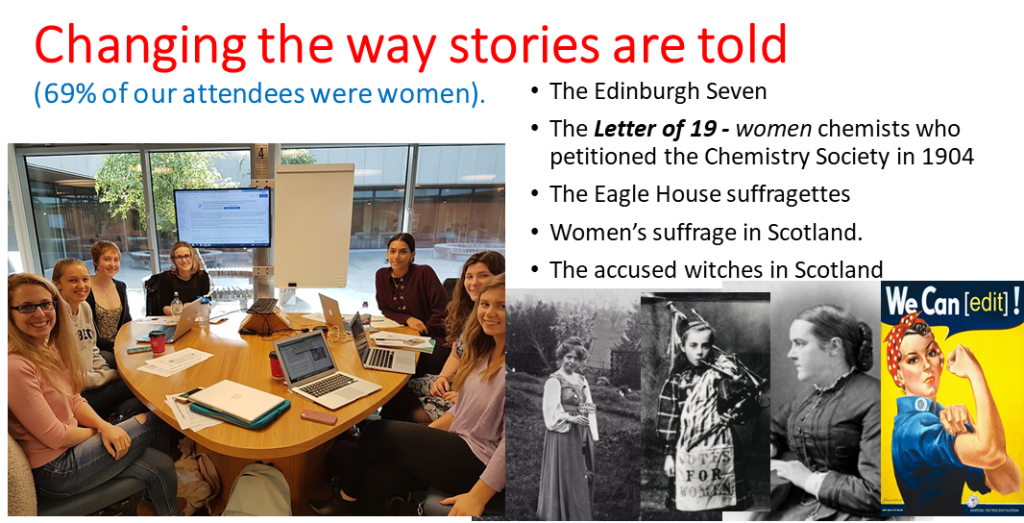

Engaging with Wikipedia in teaching & learning has helped us achieve these outcomes over the last nine years and supported thousands of learners to become discerning ‘open knowledge activists’; better able to see the gaps in our shared knowledge and motivated to address these gaps especially when it comes to under-represented groups, topics, languages, histories. Better able also to discern reliable sources from unreliable sources, biased accounts from neutral point of view, copyrighted works from open access. Imbued with the critical thinking, academic referencing skills and graduate competencies any academic institution and employer would hope to see attained.


Point 1: Wikipedia is already making use of machine learning

ORES

The Wikimedia Foundation has been using machine learning for years (since November 2015). ORES is a service that helps grade the quality of Wikipedia edits and evaluate changes made. Part of its function is to flag potentially problematic edits and bring them to the attention of human editors. The idea is that when you have as many edits to deal with as Wikipedia does, applying some means of filtering can make it easier to handle.

“The important thing is that ORES itself does not make edits to Wikipedia, but synthesizes information and it is the human editors who decide how they act on that information” – Dr. Richard Nevell, Wikimedia UK
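The triage idea described above can be sketched in a few lines. This is only an illustration, not ORES itself or its API: the `ScoredEdit` class, the `damaging_prob` field, the `flag_for_review` function and the 0.8 threshold are all hypothetical names and values chosen for the example, and the scores are made up.

```python
from dataclasses import dataclass


@dataclass
class ScoredEdit:
    revision_id: int      # hypothetical revision identifier
    damaging_prob: float  # hypothetical model score between 0 and 1


def flag_for_review(edits, threshold=0.8):
    """Return edits whose score meets the threshold, highest score first.

    Nothing is reverted here: the function only builds a review queue,
    and a human editor decides what to do with each flagged edit.
    """
    flagged = [e for e in edits if e.damaging_prob >= threshold]
    return sorted(flagged, key=lambda e: e.damaging_prob, reverse=True)


if __name__ == "__main__":
    # Made-up scores, purely for illustration.
    sample = [
        ScoredEdit(101, 0.05),
        ScoredEdit(102, 0.93),
        ScoredEdit(103, 0.81),
        ScoredEdit(104, 0.40),
    ]
    for edit in flag_for_review(sample):
        print(edit.revision_id, edit.damaging_prob)
```

The point of the sketch is the division of labour: the model narrows thousands of edits down to a short queue, and the decision stays with the human editor.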

MinT

Rather than relying entirely on external machine translation models (Google Translate, Yandex, Apertium, LingoCloud), Wikimedia also now has its own machine translation tool, MinT (Machine in Translation), available since July 2023. It is based on multiple state-of-the-art open source neural machine translation models [5], including (1) Meta’s NLLB-200, (2) Helsinki University’s OPUS, (3) IndicTrans2 and (4) Softcatalà.

The combined result is that MinT now supports more than 70 languages that are not supported by other services (including 27 languages for which there is no Wikipedia yet).[5]

“The translation models used by MinT support over 200 languages, including many underserved languages that are getting machine translation for the first time”.[6]

Machine translation is one application of AI, or more accurately of large language models, that many readers may be familiar with. It aids the translation of knowledge from one language to another, building understanding between different languages and cultures. The English Wikipedia doesn’t allow unsupervised machine translations to be added to its pages, but human editors are welcome to use these tools and add content. The key component is human supervision, with no unedited or unaltered machine translation permitted to be published on Wikipedia. We have made use of the Content Translation tool on the Translation Studies MSc for the last eight years to give our students meaningful, practical published translation experience ahead of the world of work.

Point 2: Recent study finds artificial intelligence can aid Wikipedia’s verifiability

“It might seem ironic to use AI to help with citations, given how ChatGPT notoriously botches and hallucinates citations. But it’s important to remember that there’s a lot more to AI language models than chatbots….”[7]

SIDE – a potential use case

A study published in Nature Machine Intelligence in October 2023 demonstrated that SIDE, a neural-network-based system, could aid the verifiability of the references used in Wikipedia’s articles.[8] SIDE was trained using the references in existing ‘Featured Articles’ on Wikipedia (the 8,000+ best quality articles on Wikipedia) to help flag citations that were unlikely to support the statement or claim being made. SIDE would then search the web for alternative citations better placed to support the claim being made in the article.

The paper’s authors observed that, for the top 10% of citations tagged as most likely to be unverifiable by SIDE, human editors preferred the system’s suggested alternatives over the originally cited reference 70% of the time.[8]
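To make the ‘top 10%’ idea concrete, here is a minimal sketch of how a review list might be drawn from scored citations. It is not SIDE’s code: the `least_supported` function, the `(citation_id, support_score)` pairs and the convention that a lower score means weaker support are assumptions made for illustration only.

```python
import math


def least_supported(citations, fraction=0.10):
    """Return the given fraction of citations with the lowest support scores.

    `citations` is a list of (citation_id, support_score) pairs, where a
    lower score means the source looks less likely to back the claim.
    At least one citation is returned whenever the list is non-empty.
    """
    if not citations:
        return []
    count = max(1, math.ceil(len(citations) * fraction))
    ranked = sorted(citations, key=lambda pair: pair[1])
    return ranked[:count]


if __name__ == "__main__":
    # Made-up scores, purely for illustration.
    scored = [("ref-a", 0.91), ("ref-b", 0.12), ("ref-c", 0.55), ("ref-d", 0.34)]
    print(least_supported(scored, fraction=0.5))
```

In this sketch the shortlist is only a starting point: as in the study, a human editor would still compare the flagged reference against any suggested alternative before changing the article.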

What does this mean?

“Wikipedia lives and dies by its references, the links to sources that back up information in the online encyclopaedia. But sometimes, those references are flawed — pointing to broken websites, erroneous information or non-reputable sources.” [7]

This use case could, theoretically, save editors time in checking the accuracy and verifiability of citations in articles BUT Aleksandra Urman, a computational scientist at the University of Zurich, warns that this would only be the case if the system were deployed correctly and in line with “what the Wikipedia community would find most useful”.[8]

Indeed, practical implementation and actual usefulness remain to be seen BUT the potential is acknowledged by some within the Wikimedia and open education space:

“This is a powerful example of machine learning tools that can help scale the work of volunteers by efficiently recommending citations and accurate sources. Improving these processes will allow us to attract new editors to Wikipedia and provide better, more reliable information to billions of people around the world.” – Dr. Shani Evenstein Sigalov, educator and Free Knowledge advocate.

One final note is that Urman pointed out that Wikipedia users testing the SIDE system were TWICE as likely to prefer neither of the references as they were to prefer the ones suggested by SIDE. So the human editor would still have to go searching for the relevant citation online in such instances.

Point 3: ChatGPT and Wikipedia

Do people trust ChatGPT more than Google Search and Wikipedia?

No, thankfully. A focus group and interview study published in 2024 revealed that not all users trust ChatGPT-generated information as much as Google Search and Wikipedia.[9]

Has the emergence and use of ChatGPT affected engagement with Wikipedia?

In November 2022, ChatGPT was released to the public and quickly became a popular source of information, serving as an effective question-answering resource. Early indications have suggested that it may be drawing users away from traditional question answering services.

A 2024 paper examined Wikipedia page visits, visitor numbers, number of edits and editor numbers in twelve Wikipedia languages. These metrics were compared before and after the 30th of November 2022, when ChatGPT was released. The paper’s authors also developed a panel regression model to better understand and quantify any differences. The paper concludes that while ChatGPT negatively impacted engagement in question-answering services such as StackOverflow, the same could not be said, as of yet, of Wikipedia. Indeed, there was little evidence of any impact on edits and editor numbers, and any impact seems to have been extremely limited.[10]
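The simplest version of the before-and-after comparison can be sketched as follows. This is deliberately naive and is not the paper’s panel regression: it only averages a metric on each side of the release date. The function names and the sample figures are hypothetical placeholders, not data from the study.

```python
from datetime import date
from statistics import mean

CHATGPT_RELEASE = date(2022, 11, 30)  # release date given in the text


def before_after_means(observations, cutoff=CHATGPT_RELEASE):
    """Split (day, value) observations at the cutoff and average each side."""
    before = [value for day, value in observations if day < cutoff]
    after = [value for day, value in observations if day >= cutoff]
    if not before or not after:
        raise ValueError("need observations on both sides of the cutoff")
    return mean(before), mean(after)


def relative_change(before_mean, after_mean):
    """Change after the cutoff as a fraction of the earlier average."""
    return (after_mean - before_mean) / before_mean


if __name__ == "__main__":
    # Placeholder page-view counts for one language edition, not real data.
    views = [
        (date(2022, 9, 1), 1000),
        (date(2022, 10, 1), 1040),
        (date(2022, 12, 1), 1010),
        (date(2023, 1, 1), 1030),
    ]
    earlier, later = before_after_means(views)
    print(earlier, later, relative_change(earlier, later))
```

A raw comparison like this cannot separate a ChatGPT effect from seasonal swings or longer-term trends, which is one reason a study would reach for a regression across many languages rather than stop here.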

Wikimedia CEO Maryana Iskander states,

“We have not yet seen a drop in page views on the Wikipedia platform since ChatGPT launched. We’re on it. We’re paying close attention, and we’re engaging, but also not freaking out, I would say.”[11]

Do Wikipedia editors think ChatGPT or other AI generators should be used for article creation?

“AI generators are useful for writing believable, human-like text, they are also prone to including erroneous information, and even citing sources and academic papers which don’t exist. This often results in text summaries which seem accurate, but on closer inspection are revealed to be completely fabricated.”[12]

Author of Should You Believe Wikipedia?: Online Communities and the Construction of Knowledge, Regents Professor Amy Bruckman states large language models are only as good as their ability to distinguish fact from fiction… so, in her view, they [LLMs] can be used to write content for Wikipedia BUT only ever as a first draft which can only be made useful if it is then edited by humans and the sources cited checked by humans also.[12]

“Unreviewed AI generated content is a form of vandalism, and we can use the same techniques that we use for vandalism fighting on Wikipedia, to fight garbage coming from AI.” stated Bruckman.[12]

Wikimedia CEO Maryana Iskander agrees,

“There are ways bad actors can find their way in. People vandalize pages, but we’ve kind of cracked the code on that, and often bots can be disseminated to revert vandalism, usually within seconds. At the foundation, we’ve built a disinformation team that works with volunteers to track and monitor.”[11]

For the Wikipedia community’s part, a draft policy setting out the limits on the use of artificial intelligence in article generation has been written to help editors avoid posting copyright violations on an openly licensed Wikipedia page, or anything that might open Wikipedia volunteers up to libel suits. At the same time, the Wikimedia Foundation’s developers are creating tools to help Wikipedia editors better identify online content that has been written by AI bots. Part of this is the greater worry that it is the digital news media, more than Wikipedia, that may be prone to AI-generated content, and it is these hitherto reliable news sources that Wikipedia editors would normally like to cite.

“I don’t think we can tell people ‘don’t use it’ because it’s just not going to happen. I mean, I would put the genie back in the bottle, if you let me. But given that that’s not possible, all we can do is to check it.”[12]

What is right, wrong or missing on Wikipedia spreads across the internet. Without enough checks, balances and human supervision, AI-generated garbage could be replicated on Wikipedia and then spread to other news sources and other AI services, leaving us in a continuous ‘garbage-in-garbage-out’ spiral to the bottom. At the annual Wikimedia conference in August 2024, Wikimedia Sweden’s John Cummings termed this the Habsburg AI Effect (i.e. a degenerative ‘inbreeding’ of knowledge, consuming each other in a death loop, getting progressively and demonstrably worse and more ill each time). Despite Wikipedia and Google’s interdependence, the Wikipedia community itself is unsure it wants to enter any kind of unchecked feedback loop with ChatGPT, whereby OpenAI consumes Wikipedia’s free content to train its models, which then feed into other commercial paywalled sites, while ChatGPT’s erroneous ‘hallucinations’ may, in turn, have been feeding into Wikipedia articles.

It is true to say that while Jimmy Wales has expressed his reluctance to see ChatGPT used as yet (“It has a tendency to just make stuff up out of thin air which is just really bad for Wikipedia — that’s just not OK. We’ve got to be really careful about that.”)[13], other Wikipedia editors have expressed their willingness to use it to get past the inertia and “activation energy” of the first couple of paragraphs of a new article and, with human supervision (or humans as Wikipedia’s “special sauce”, if you will), this could actually help Wikipedia create greater numbers of quality articles to better reach its aim of becoming the ‘sum of all knowledge’.[14]

One final suggestion posted on the Wikipedia mailing list has been the use of the BLOOM large language model, which makes use of Responsible AI Licences (RAIL).[15]

“Similar to some versions of the open Creative Commons license, the RAIL license enables flexible use of the AI model while also imposing some restrictions—for example, requiring that any derivative models clearly disclose that their outputs are AI-generated, and that anything built off them abide by the same rules.”[12]

A Wikimedia Foundation spokesperson stated that,

“Based on feedback from volunteers, we’re looking into how these models may be able to help close knowledge gaps and increase knowledge access and participation. However, human engagement remains the most essential building block of the Wikimedia knowledge ecosystem. AI works best as an augmentation for the work that humans do on our project.”[12]

Point 4: How Wikipedia can shape the future of AI

WikiAI?

In Alek Tarkowski’s 2023 thought piece he views the ‘existential challenge’ of AI models becoming the new gatekeepers of knowledge (and potentially replacing Wikipedia) as an opportunity for Wikipedia to think differently and develop its own WikiAI, “not just to protect the commons from exploitation. The goal also needs to be the development of approaches that support the commons in a new technological context, which changes how culture and knowledge are produced, shared, and used.”[16] However, in discussion at Wikimania in August 2024, this was felt to be outwith the realms of possibility given the vast resources and financing this would require to get off the ground if tackled unilaterally by the Foundation.

Blacklisting and Attribution?

For Chris Albon, Machine Learning Director at the Wikimedia Foundation, using AI tools has been part of the work of some volunteers since 2002.[17] What’s new is that there may be more sites online using AI-generated content. However, Wikipedia has an existing practice of blacklisting sites/sources once it has become clear they are no longer reliable. More concerning is the emerging disconnect whereby AI models can provide ‘summary’ answers to questions without linking to Wikipedia or providing attribution that the information is coming from Wikipedia.

“Without clear attribution and links to the original source from which information was obtained, AI applications risk introducing an unprecedented amount of misinformation into the world. Users will not be able to easily distinguish between accurate information and hallucinations. We have been thinking a lot about this challenge and believe that the solution is attribution.”[17]

Gen-Z?

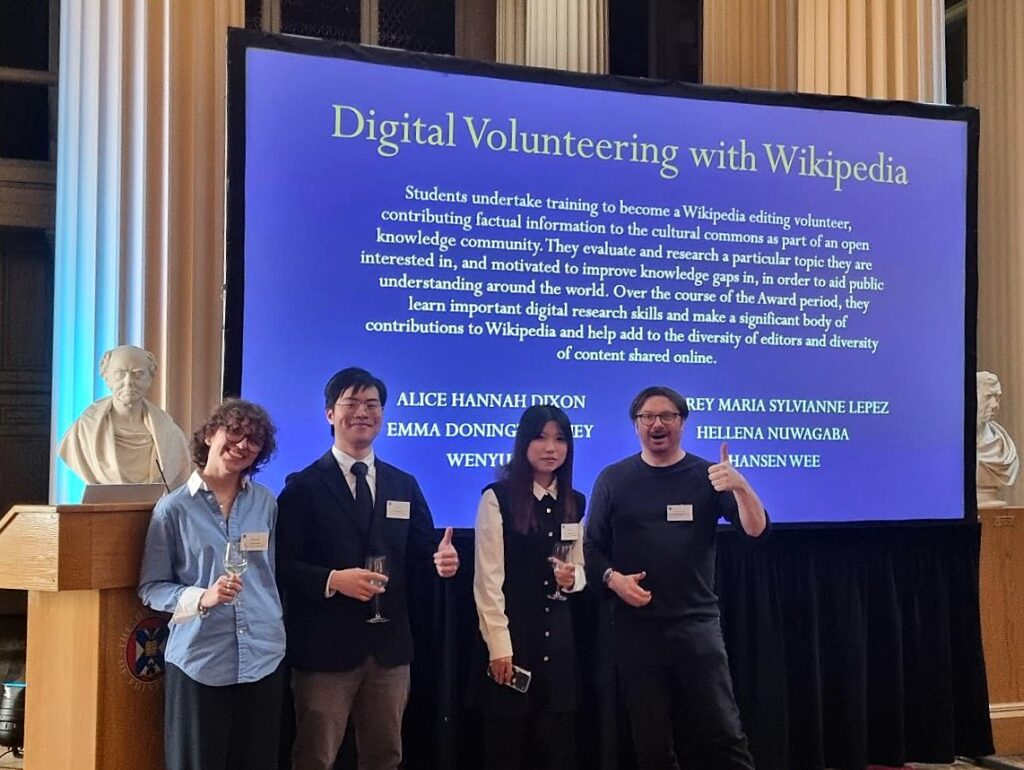

For Slate writer Stephen Harrison, while a significant number of Wikipedia contributors are already Gen Z (about 20% of Wikipedia editors are aged 18-24 according to a 2022 survey), there is a clear desire within the Wikipedia community to increase this percentage, not least to ensure the continuing relevance of Wikipedia within the knowledge ecosystem.[18] If Wikipedia becomes reduced to mere ‘training data’ for AI models, then who would want to continue editing Wikipedia, and who would want to learn to edit and take up the mantle when older editors dwindle away? Hence the push to recruit more younger editors from Generation Z, raising their awareness of how widely Wikipedia content is used across the internet and of how they can derive a sense of community and shared purpose from sharing fact-checked knowledge, plugging gaps and being part of something that feels like a world-changing endeavour.[18]

WikiProject AI Cleanup

There is already an existing project clamping down on AI content on Wikipedia, according to Jiji Veronica Kim.[19] Volunteer editors on the project are using AI-detecting tools to:

- Identify AI-generated text and images.

- Remove any unsourced claims.

- Remove any posts that do not comply with Wiki policies.

“The purpose of this project is not to restrict or ban the use of AI in articles, but to verify that its output is acceptable and constructive, and to fix or remove it otherwise….In other words, check yourself before you wreck yourself.”[19]

Point 5: Wikipedia as a knowledge destination and the internet’s conscience
